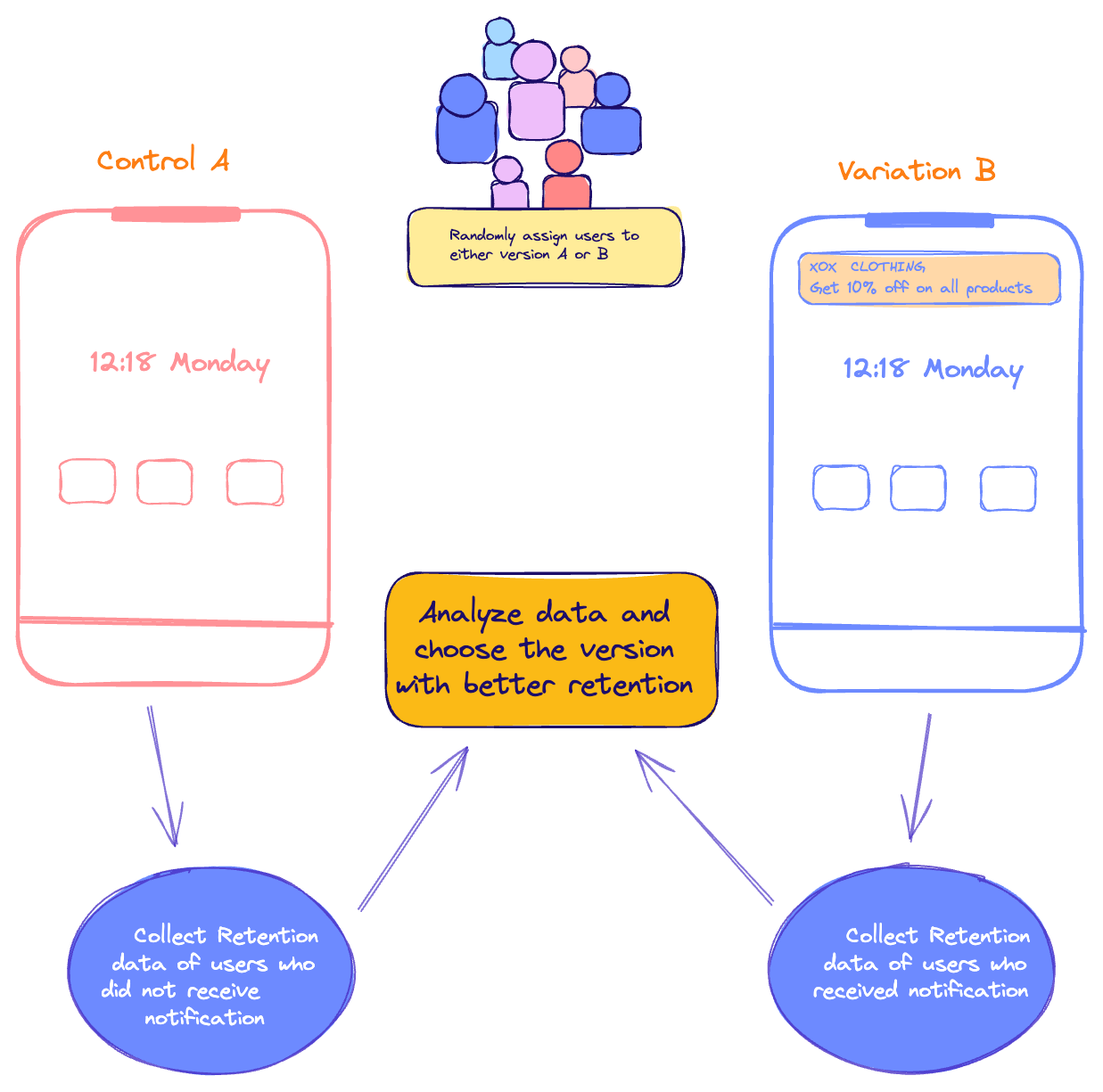

A/B testing is a method in which two versions of something (such as a feature on a webpage, an email, or an app) are compared to see which one performs better against a specific goal, helping businesses make data-driven decisions.

A/B testing cycle:

Let's design an A/B experiment to test whether sending push notifications about discounts leads to more user conversions on a mobile app.

Hypothesis: We hypothesize that users who receive discount/sale notifications will have a higher conversion rate than those who do not receive notifications.
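To test this hypothesis, users first have to be split into a group that gets the notifications and a group that does not. Here is a minimal sketch of one common way to do that, assuming each user has a stable string ID. The function name `assign_group`, the experiment label `discount_push_v1`, and the 50/50 split are all hypothetical choices made for illustration.

```python
import hashlib


def assign_group(user_id: str, experiment: str = "discount_push_v1") -> str:
    """Deterministically place a user in 'treatment' or 'control'.

    Hashing the experiment name together with the user ID gives each user
    a stable, effectively random bucket from 0 to 99, so the same user
    always lands in the same group for this experiment.
    """
    key = f"{experiment}:{user_id}".encode("utf-8")
    bucket = int(hashlib.sha256(key).hexdigest(), 16) % 100
    # Assumed 50/50 split: buckets 0-49 receive the discount notifications.
    return "treatment" if bucket < 50 else "control"


# Example usage with made-up user IDs.
for uid in ["user-1", "user-2", "user-3"]:
    print(uid, assign_group(uid))
```

Hashing instead of calling a random number generator on every visit keeps the assignment consistent across sessions without having to store it anywhere.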

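To judge whether the difference in conversion between the two versions is real and not just a coincidence, we use a p-value. The p-value is the probability of seeing a difference at least as large as the one observed if the notifications actually had no effect. If the p-value is low (usually below 0.05), the difference is unlikely to be explained by random chance alone and is treated as statistically significant; if it is high, the difference might just be due to random chance.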
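As a rough illustration of how such a p-value can be computed for conversion rates, here is a sketch of a two-proportion z-test using only the Python standard library. The function name `two_proportion_z_test` and the conversion counts are made up for the example, and the test is two-sided, which is a common default even though the hypothesis here is directional.

```python
import math


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int):
    """Return (z, p_value) for the difference between two conversion rates.

    conv_a / n_a: conversions and users in the control group (no notifications)
    conv_b / n_b: conversions and users in the treatment group (notifications)
    """
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    # Pooled rate under the assumption that both groups convert equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (rate_b - rate_a) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical numbers: 200 of 5000 control users converted,
# 260 of 5000 treatment users converted.
z, p = two_proportion_z_test(200, 5000, 260, 5000)
print(f"z = {z:.2f}, p-value = {p:.4f}")
if p < 0.05:
    print("Difference is statistically significant at the 0.05 level")
else:
    print("Difference could plausibly be due to random chance")
```

This normal approximation is reasonable when both groups are large; for small samples an exact test would be a safer choice.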

A/B testing is heavily used at tech companies to improve their platforms, make data-driven decisions, understand their customers, and grow their business.

For example, Netflix often creates several variations of a movie's thumbnail image to test which kind of thumbnail generates more user interaction.

YouTube now even has an A/B thumbnail testing tool that lets creators upload multiple thumbnail images, with a random one shown to each user. Creators then receive data on how each thumbnail performed and which one generated the higher click-through rate (CTR).

A/B testing can also be performed by region. You can release a certain feature in one country and not in another, then compare how it performs.

Interesting!